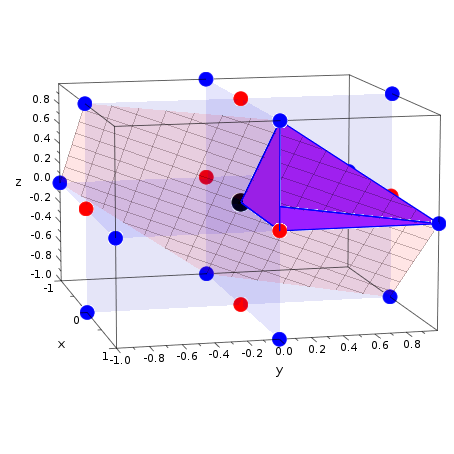

The best hyperplane (remember, it's a line in this case) is the one whose distance to the nearest element of each tag is the largest.

The linear example was easy because the data was clearly linearly separable: we could draw a straight line to separate red and blue. Sadly, things usually aren't that simple. When the tags are arranged so that no straight line can split them, it's pretty clear that there's no linear decision boundary (a single straight line that separates both tags). However, the vectors can still be very clearly segregated, and it looks as though it should be easy to separate them. So here's what we'll do: we will add a third dimension.
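The idea of adding a third dimension can be sketched numerically. The text does not say which third dimension to add, so the mapping z = x² + y² used here is an assumption chosen for illustration, as are the data points, the threshold, and the function names `lift` and `classify_lifted`. The sketch is written in plain Python with no dependencies.

```python
# Hypothetical data that no single straight line separates:
# red points sit near the origin, blue points surround them.
ring_data = [
    ((0.0, 0.0), "red"), ((0.5, 0.5), "red"),
    ((-0.5, 0.3), "red"), ((0.2, -0.7), "red"),
    ((2.0, 0.0), "blue"), ((0.0, 2.0), "blue"),
    ((-2.0, 0.0), "blue"), ((0.0, -2.0), "blue"),
    ((1.5, 1.5), "blue"),
]

def lift(point):
    """Map (x, y) to (x, y, z), with the assumed choice z = x^2 + y^2."""
    x, y = point
    return (x, y, x * x + y * y)

def classify_lifted(point, threshold=2.0):
    """In the lifted space the flat plane z = threshold acts as the boundary."""
    _, _, z = lift(point)
    return "blue" if z > threshold else "red"

for point, tag in ring_data:
    print(point, "->", lift(point), "| true:", tag, "| predicted:", classify_lifted(point))
```

In the lifted space every red point has a small z and every blue point a large one, so a flat plane separates the two tags even though no straight line does in the original (x, y) plane.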

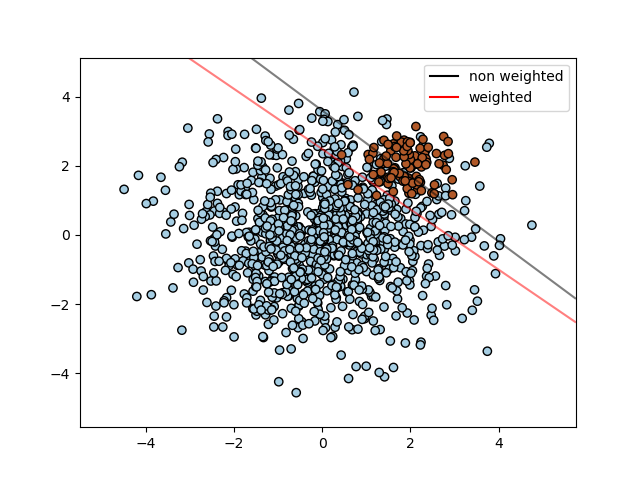

In machine learning, support vector machines (SVMs) are supervised learning models with associated learning algorithms that analyze data for classification and regression analysis. However, they are mostly used in classification problems. In this tutorial, we will try to gain a high-level understanding of how SVMs work and then implement them using R. I'll focus on developing intuition rather than rigor: we will skip as much of the math as possible and build a strong intuition for the working principle.

Support Vector Machines Algorithm

Linear Data

The basics of support vector machines and how they work are best understood with a simple example. Let's imagine we have two tags, red and blue, and our data has two features, x and y. We want a classifier that, given a pair of (x, y) coordinates, outputs whether it's red or blue. We plot our already labeled training data on a plane.

A support vector machine takes these data points and outputs the hyperplane (which in two dimensions is simply a line) that best separates the tags. This line is the decision boundary: anything that falls to one side of it we will classify as blue, and anything that falls to the other as red.

But what exactly is the best hyperplane? For SVM, it's the one that maximizes the margins from both tags.

I was wondering if I can visualize with the example the fact that for all points $x$ on the separating hyperplane, the following equation holds true:

$$w^T x + w_0 = 0$$
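One way to make the hyperplane equation $w^T x + w_0 = 0$ and the idea of a margin concrete is a small numeric sketch. Everything in it is hypothetical: the training points, the two candidate lines, and the helper names `decision_value`, `classify`, and `margin`. It does not train an SVM. It only compares two hand-picked lines that both separate the tags, using plain Python with no dependencies.

```python
import math

# Hypothetical labeled training data: ((x, y), tag).
training_data = [
    ((1.0, 1.0), "red"), ((2.0, 1.0), "red"), ((1.0, 2.0), "red"),
    ((4.0, 4.0), "blue"), ((5.0, 4.0), "blue"), ((4.0, 5.0), "blue"),
]

def decision_value(w, w0, point):
    """Evaluate w^T x + w_0; it is exactly 0 for points on the hyperplane."""
    return w[0] * point[0] + w[1] * point[1] + w0

def classify(w, w0, point):
    """Tag a point by which side of the line it falls on."""
    return "blue" if decision_value(w, w0, point) > 0 else "red"

def margin(w, w0, data):
    """Distance from the line to the nearest training point of either tag."""
    norm = math.hypot(w[0], w[1])
    return min(abs(decision_value(w, w0, p)) / norm for p, _ in data)

# Two hypothetical candidate lines, each given as (w, w0).
line_a = ((1.0, 1.0), -5.5)  # the line x + y = 5.5
line_b = ((1.0, 0.0), -2.5)  # the line x = 2.5

for name, (w, w0) in [("line_a", line_a), ("line_b", line_b)]:
    correct = all(classify(w, w0, p) == tag for p, tag in training_data)
    print(name, "separates the tags:", correct,
          "| margin:", round(margin(w, w0, training_data), 3))

# A point lying on line_a satisfies the hyperplane equation.
print(decision_value(*line_a, (2.75, 2.75)))
```

Both candidate lines classify every training point correctly, but `line_a` sits farther from its nearest point than `line_b` does, which is the sense in which an SVM would prefer it.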